From Extension to Ecosystem: Mastering Quality in Polarion Development

Stability is trust. Discover how I built an AI-powered release platform to conquer Polarion’s toughest UI challenges and why my new roadmap starts with the Public Code Editor release.

In the fast-paced world of software requirements and ALM, we often talk about "features." But as any seasoned developer knows, a feature is only as good as the trust you can place in it.

Following the initial release of my Code Editor Extension for Polarion, I received fantastic feedback. Instead of rushing headlong into the next big project (like the highly anticipated Permissions Plugin), I decided to listen to the feedback and double down on what matters most: Stability, Reliability, and Quality.

Today, I’m excited to show you how I transformed a standalone extension into a rock-solid, AI-supported release platform.

The Quality Shift: Why "Good Enough" Isn't Enough

When we build extensions for a core system like Siemens Polarion, we are touching the backbone of many organizations' development processes. A bug here isn't just an inconvenience; it can disrupt entire workflows. To ensure that every future update is "bulletproof," I’ve implemented a multi-layered testing and release strategy.

1. The Testing Foundation: Beating the "Polarion UI Challenge"

Manual testing is the enemy of scale. However, anyone who has tried to automate Polarion knows it's no small feat. The architecture presents unique "nightmares":

- Cryptic & Dynamic IDs: Elements either lack stable identifiers or carry IDs that change with every build.

- The iFrame Maze: Content is often deeply nested in iframes, which makes it hostile territory for standard tools.

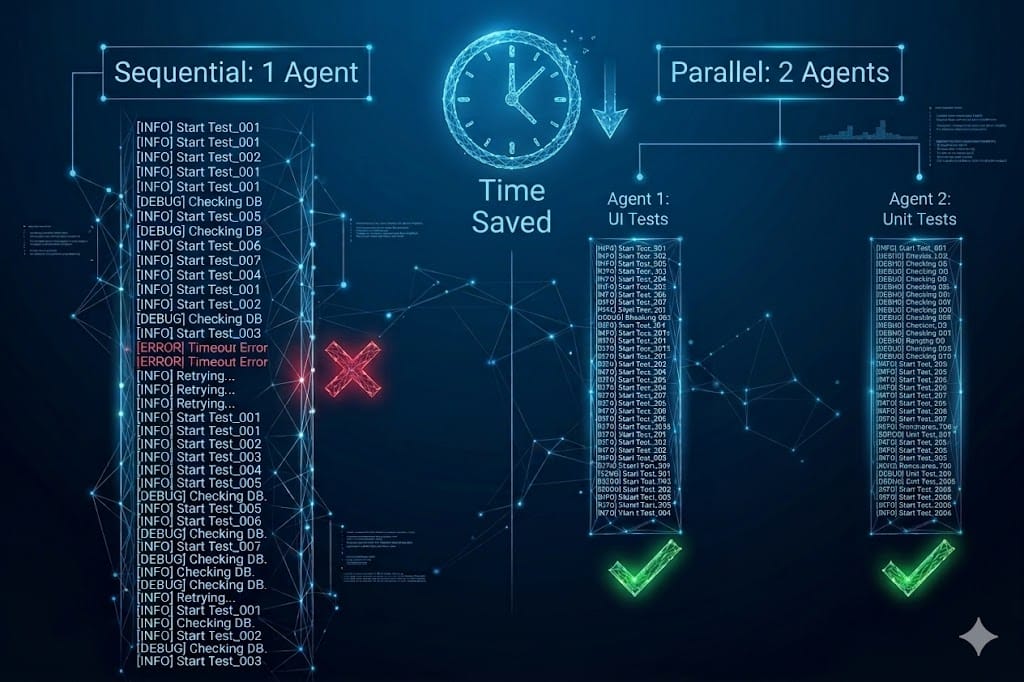

- Parallelization Conflicts: Running multiple tests at once is the "Holy Grail," but it often leads to session conflicts and account issues.

The Solution: I’ve implemented two critical layers:

- Unit Tests (Java): Ensuring backend consistency.

- UI Tests (Playwright): Simulating real user interactions.
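For the parallelization conflicts mentioned above, one common mitigation is to reserve a dedicated account for every parallel worker so that sessions never overlap. The following is a minimal sketch under that assumption, not necessarily the setup used in this project: `accountForWorker`, `TestAccount`, and the account names are hypothetical, and the worker slot would come from the test runner (in Playwright Test, `testInfo.parallelIndex`).

```typescript
// Hypothetical sketch: one dedicated account per parallel worker.
interface TestAccount {
  username: string;
  password: string;
}

// Returns the account reserved for the given worker slot.
// It throws instead of wrapping around, because two workers sharing
// one account is exactly the session conflict we want to avoid.
function accountForWorker(
  pool: readonly TestAccount[],
  parallelIndex: number,
): TestAccount {
  if (Math.floor(parallelIndex) !== parallelIndex || parallelIndex < 0) {
    throw new Error(`Invalid worker index: ${parallelIndex}`);
  }
  if (parallelIndex >= pool.length) {
    throw new Error(
      `No account for worker ${parallelIndex}; pool has ${pool.length} account(s)`,
    );
  }
  return pool[parallelIndex];
}

// Illustrative pool only; real credentials would come from CI secrets.
const accountPool: TestAccount[] = [
  { username: "ui-test-0", password: "<from-secret>" },
  { username: "ui-test-1", password: "<from-secret>" },
];
```

Failing loudly when the pool is too small keeps the number of workers and the number of accounts in sync, rather than silently reintroducing shared sessions.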

The AI Twist: AI-driven tooling (for example, agents connected via MCP, the Model Context Protocol) allowed me to generate the base coverage for these tests almost instantly. After I fed the messy DOM structure to the AI, it generated resilient test scripts that find the "unfindable" elements, providing a safety net for all future refactoring.
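To make "resilient" more concrete, here is a small sketch of two techniques that are often used against nested iframes and generated IDs: walking a chain of frames step by step, and matching on the stable fragment of an `id` instead of the full value. The helper names (`locateInFrames`, `idContains`) and the `FrameScope` type are hypothetical and written so the block compiles without Playwright installed; Playwright's `Page` and `FrameLocator` both provide `frameLocator()` and `locator()`, so they should fit this shape.

```typescript
// Minimal structural type standing in for Playwright's Page / FrameLocator.
// L is the locator type (Locator in Playwright).
interface FrameScope<L> {
  frameLocator(selector: string): FrameScope<L>;
  locator(selector: string): L;
}

// Walks a chain of nested iframes, outermost first, and returns
// a locator for the target inside the innermost frame.
function locateInFrames<L>(
  root: FrameScope<L>,
  framePath: readonly string[],
  target: string,
): L {
  let scope = root;
  for (const frameSelector of framePath) {
    scope = scope.frameLocator(frameSelector);
  }
  return scope.locator(target);
}

// Builds a CSS attribute selector that matches any element whose id
// contains the given stable fragment, ignoring generated prefixes or suffixes.
function idContains(tag: string, stableFragment: string): string {
  // Escape backslashes and double quotes so the fragment stays inside the quoted value.
  const escaped = stableFragment.replace(/["\\]/g, "\\$&");
  return `${tag}[id*="${escaped}"]`;
}
```

In a Playwright test this could be called as `locateInFrames(page, ["iframe.outer", "iframe.inner"], idContains("button", "save"))`, where the frame selectors and the `save` fragment are placeholders for whatever the real DOM exposes.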

2. The Release Pipeline: Automation in Action

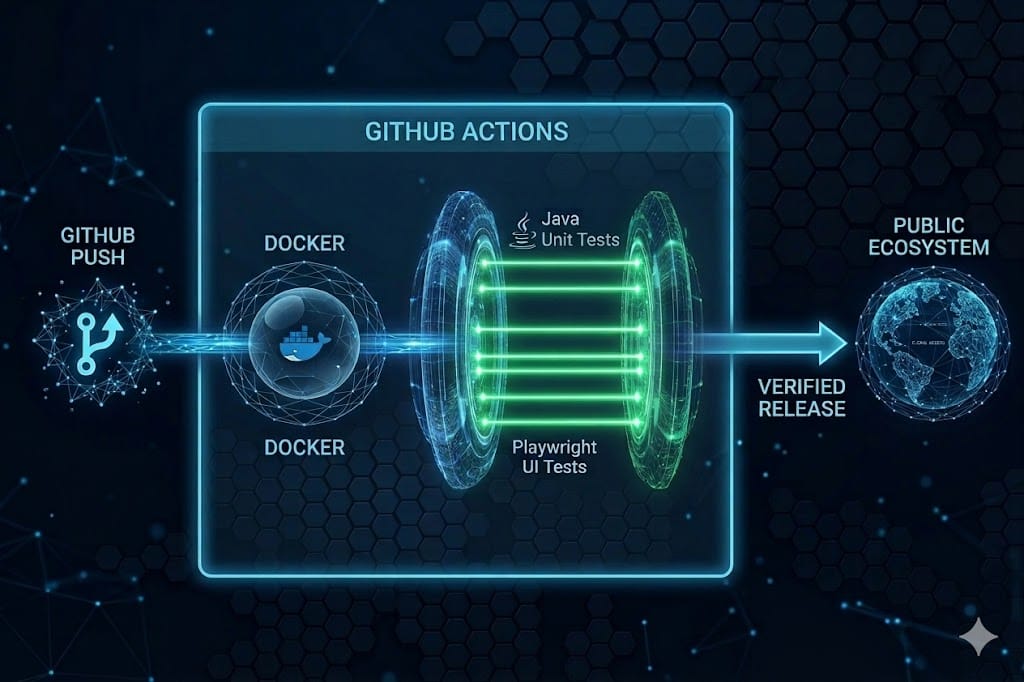

I’ve moved the entire build process to GitHub Actions. Whenever I push code:

- Unit Tests run.

- A Docker Container with a full Polarion instance is spun up.

- The extension is deployed and tested via Playwright.

This automation allows me to develop features and fix bugs quickly while verifying on every push that everything still works as intended.
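One detail such a pipeline usually has to handle is the gap between starting the container and the application inside it actually being ready for UI tests. The sketch below shows one way to bridge that gap by polling a readiness probe. It is an illustration under assumptions, not the workflow from this project: `waitUntilReady`, `ReadinessProbe`, and the attempt and delay parameters are all hypothetical.

```typescript
// Hypothetical helper: poll until a freshly started service answers,
// so UI tests only begin once the instance is reachable.
type ReadinessProbe = () => Promise<boolean>;

// Default delay implementation based on setTimeout.
const realSleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Resolves with the number of attempts that were needed;
// rejects if the service never reports ready.
async function waitUntilReady(
  probe: ReadinessProbe,
  maxAttempts: number,
  delayMs: number,
  sleep: (ms: number) => Promise<void> = realSleep,
): Promise<number> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    let ready = false;
    try {
      ready = await probe();
    } catch {
      // A failing probe (e.g. connection refused) counts as "not ready yet".
      ready = false;
    }
    if (ready) return attempt;
    // Wait before the next attempt, but not after the last one.
    if (attempt < maxAttempts) await sleep(delayMs);
  }
  throw new Error(`Service not ready after ${maxAttempts} attempt(s)`);
}
```

A probe could be as simple as an HTTP request against whatever address the container exposes, returning `true` once the response is successful. The `sleep` parameter is injectable so the helper can be unit-tested without real waiting.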

The Roadmap: Building a Public Ecosystem

My goal is to provide a solid foundation for the Polarion community. Here is how I will proceed:

- Public Code Editor (Coming Soon): My first priority is moving the Code Editor Extension to a public repository. It is now stable and backed by a rigorous test suite.

- The Custom Permissions Plugin: With the infrastructure in place, I will finally tackle this complex challenge.

- The Release Blueprint: Once the products are out, I will look into sharing my optimized release and testing workflows as a "blueprint" for the community.

A Note on Open Knowledge vs. Expert Implementation

I believe in sharing knowledge and providing public extensions to move the community forward. However, please understand that while the tools are public, the "secret sauce" (the specific AI-agent setups, the deep Docker orchestration, and the architectural skills to scale these systems) cannot be fully shared in a single repository.

Need a deeper dive? I am creating the foundation for everyone to use, but I know that every organization has unique use cases. If you find that the public tools are a great start but you need a more tailored, professional implementation:

Let’s get the conversation started!

I’m curious about your experiences with Polarion development and automation:

- The iFrame Struggle: Have you found a different way to handle the nested structures in Polarion UI tests, or are you still fighting "flaky" tests?

- AI Integration: Are you already using AI agents or LLMs to assist in your ALM workflows, or is it still a manual world for you?

- Release Workflows: Is automated testing via Docker part of your current pipeline, or is "manual deployment" still the standard in your team?

Drop your thoughts in the comments – I’d love to hear your approach!